Personal updates

-

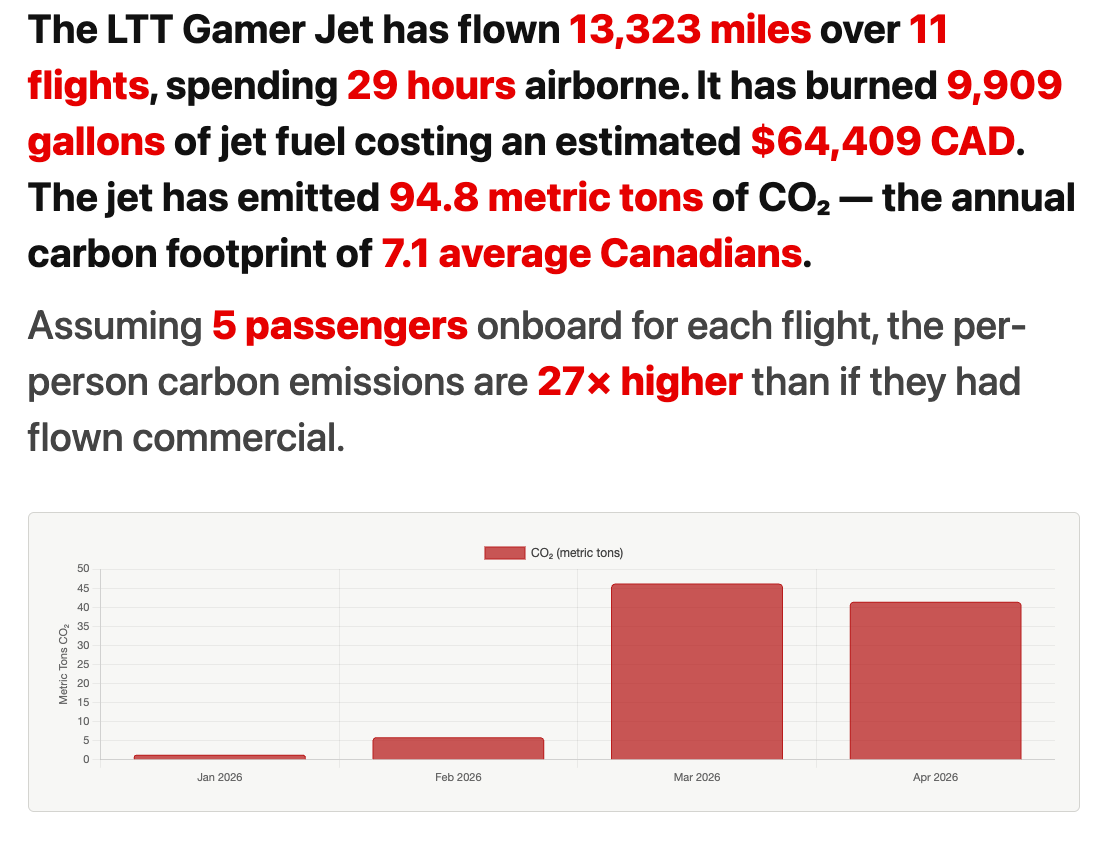

Quantifying the impact of the Linus Tech Tips “Gamer Jet”

Recently the tech YouTuber Linus Sebastian (Linus Tech Tips) bought a private jet, and naturally this has unleashed a torrent of hot takes from fans and haters alike. The arguments mostly boil down to whether the emissions and their impact on the environment are unreasonable or justifiable. Of course, the arguments are pretty…

-

Photos: Minotaur Rocket Launch

Photos from the NROL-129 mission launch using the Northrop Grumman Minotaur rocket from the Mid-Atlantic Regional Spaceport at NASA’s Wallops Flight Facility in Virginia.

-

Photos: Parachute Jump Training

Photos from an Army training exercise at the sand dunes in West Greenwich, RI.

-

Mohawk Trail drone trip

I took a short road trip to western Massachusetts with my buddy Andy. He brought his GoPro Karma drone. I brought my personal camera rig.

-

Taking the Mavic Air to Nova Scotia

Footage from a weekend road trip from Rhode Island to New Brunswick and Nova Scotia, Canada. Filmed on a DJI Mavic Air. Music by Fjordwalker.

-

My professional sound setup for live streaming with Twitch and OBS

I’m a lifelong avgeek, and as I learn to fly for real, my home simulator has become a really useful training tool. Occasionally I’ll stream my flights, and because I have a production background, I wanted a proper audio workflow. The following setup is exactly what I use to send audio…